Creating VMs with Terraform on OSK for ResOps

Assumptions

- The OpenStack CLI is installed.

- The names and locations of the openrc files are hardcoded: ~/Downloads/ResOps-openrc.sh and ~/Downloads/ResOps-openrc-V2.sh
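Both assumptions can be checked before starting. The following is a minimal sketch, not part of the ResOps scripts; the helper names find_openrc and have_cmd are hypothetical and only the two file paths come from the list above.

```shell
#!/usr/bin/env bash
# Sketch: verify the two assumptions before running anything else.

# Hypothetical helper: print the first existing file among the arguments,
# or return 1 when none of them exists.
find_openrc() {
    local candidate
    for candidate in "$@"; do
        if [ -f "$candidate" ]; then
            printf '%s\n' "$candidate"
            return 0
        fi
    done
    return 1
}

# Hypothetical helper: report whether a command is available on PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

if have_cmd openstack; then
    echo "openstack CLI: found"
else
    echo "openstack CLI: missing"
fi

if openrc=$(find_openrc "$HOME/Downloads/ResOps-openrc.sh" \
                        "$HOME/Downloads/ResOps-openrc-V2.sh"); then
    echo "openrc file: $openrc"
else
    echo "openrc file: missing"
fi
```

The script only reports what it finds; it does not source the openrc file or contact OpenStack.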

Process

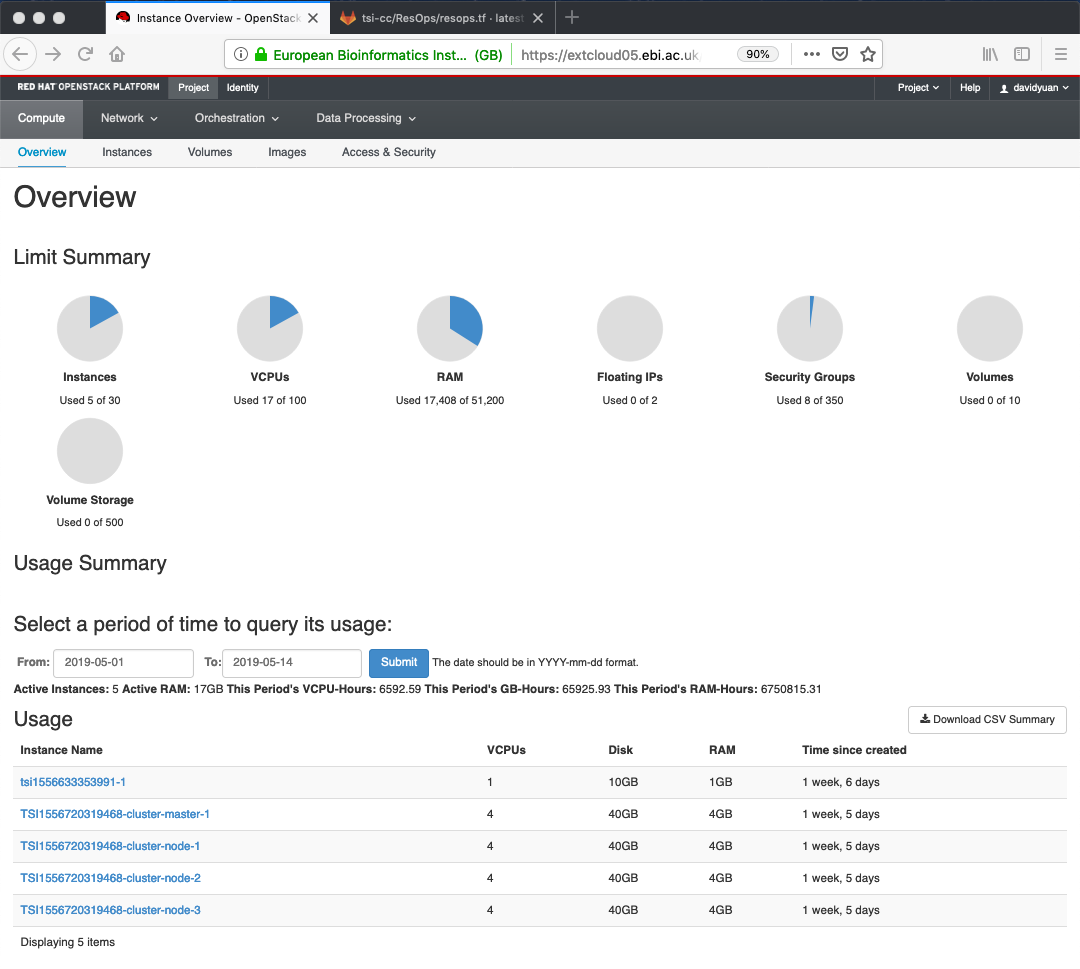

- Log on to OpenStack Horizon. The overview of the tenancy shows that no ResOps cluster has been created yet.

Inspect the variable values at https://gitlab.ebi.ac.uk/TSI/tsi-ccdoc/blob/master/tsi-cc/ResOps/scripts/kubespray/resops.tf. Make a note of important variables such as cluster_name, image, network_name, floatingip_pool, number_of_bastions, number_of_k8s_masters, number_of_k8s_nodes and number_of_k8s_nodes_no_floating_ip. They describe the structure of the cluster.
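With a local checkout, the structural variables can be pulled out of the file in one step. This is a sketch under two assumptions: that resops.tf holds plain `name = value` assignments, and that the checkout lives at the path used elsewhere on this page. The helper name show_cluster_vars is hypothetical.

```shell
# Hypothetical helper: print the assignments of the variables that
# describe the cluster structure in a Terraform variable file.
show_cluster_vars() {
    grep -E '^[[:space:]]*(cluster_name|image|network_name|floatingip_pool|number_of_bastions|number_of_k8s_masters|number_of_k8s_nodes|number_of_k8s_nodes_no_floating_ip)[[:space:]]*=' "$1"
}

# Assumed location of the checkout, taken from the paths used on this page.
tf_file="$HOME/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/resops.tf"
if [ -f "$tf_file" ]; then
    show_cluster_vars "$tf_file" || echo "no matching variables in $tf_file"
else
    echo "not found: $tf_file"
fi
```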

Inspect the sample script https://gitlab.ebi.ac.uk/TSI/tsi-ccdoc/blob/master/tsi-cc/ResOps/scripts/kubespray/terraform.sh. Note that, as written, this script will not run anywhere other than on my laptop, because it assumes my ResOps-openrc.sh is at a fixed location. Adapt the Bash script as needed.
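One way to adapt the script is to take the openrc path from the environment instead of hardcoding it. The sketch below is not the content of terraform.sh; the variable OPENRC and the function load_openrc are hypothetical names, and OS_PROJECT_NAME is only printed if the openrc file happens to set it.

```shell
# Hypothetical variable: path to the openrc file, defaulting to the
# location that the sample script assumes.
OPENRC="${OPENRC:-$HOME/Downloads/ResOps-openrc.sh}"

# Hypothetical helper: source an openrc file into the current shell,
# or fail with a message when the file does not exist.
load_openrc() {
    if [ ! -f "$1" ]; then
        echo "openrc file not found: $1" >&2
        return 1
    fi
    . "$1"
}

if load_openrc "$OPENRC"; then
    echo "loaded credentials for project: ${OS_PROJECT_NAME:-unknown}"
else
    echo "set OPENRC to the path of your own openrc file"
fi
```

A caller would then run, for example, `OPENRC=/path/to/my-openrc.sh ./terraform.sh`. Keep in mind that openrc files downloaded from Horizon may prompt for a password when sourced.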

Inspect the Terraform scripts for OpenStack under https://github.com/kubernetes-sigs/kubespray/tree/master/contrib/terraform/openstack. They are well written, follow many best practices and are carefully modularized.

- Default values are provided in the top-level variables.tf.

- The infrastructure is divided into three modules (network, ips and compute) in the top-level kubespray.tf.

- Each module is organized with main.tf (as required for a Terraform module), variables.tf (input) and output.tf (output). The resource description is in main.tf. The APIs can be found at https://www.terraform.io/docs/providers/openstack/index.html.
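To see this layout on disk, the Terraform files of a local kubespray clone can be listed. This is a sketch only: KUBESPRAY_DIR is an assumed clone location and list_tf_files is a hypothetical helper name.

```shell
# Hypothetical helper: list the Terraform files below a directory,
# with paths relative to that directory.
list_tf_files() {
    ( cd "$1" && find . -type f -name '*.tf' | sort )
}

# KUBESPRAY_DIR is an assumed location of a local kubespray clone.
KUBESPRAY_DIR="${KUBESPRAY_DIR:-$HOME/kubespray}"
tf_root="$KUBESPRAY_DIR/contrib/terraform/openstack"
if [ -d "$tf_root" ]; then
    list_tf_files "$tf_root"
else
    echo "no kubespray clone at $KUBESPRAY_DIR"
fi
```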

Run ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/terraform.sh in a terminal window. In the end, resources are created according to ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/resops.tf:

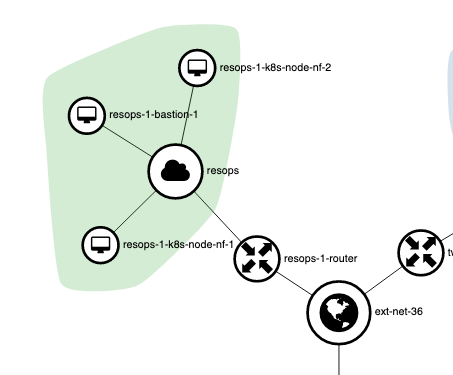

    Apply complete! Resources: 20 added, 0 changed, 0 destroyed.

    Outputs:

    bastion_fips = [ 193.62.55.21 ]
    floating_network_id = e25c3173-bb5c-4bbc-83a7-f0551099c8cd
    k8s_master_fips = []
    k8s_node_fips = []
    private_subnet_id = e457fd0b-c02d-4287-b049-4f143a32b2fb
    router_id = 470c37d6-1ec6-49bd-9a9e-cc7712dfcf07

Log back on to OpenStack Horizon to see that the VMs (a bastion and 2 nodes without floating IPs), the private network / subnet (resops) and the router (resops-1-router) have been created.
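The bastion address is needed later for SSH access, so it can be convenient to extract it from the Terraform output rather than copy it by hand. The filter below is a minimal sketch; extract_ipv4 is a hypothetical helper name, and it matches anything shaped like an IPv4 address without validating the octets.

```shell
# Hypothetical helper: print every IPv4-looking token read from
# standard input, one per line.
extract_ipv4() {
    grep -oE '([0-9]{1,3}\.){3}[0-9]{1,3}'
}

# Example with the bastion_fips value from the output on this page.
printf '%s\n' 'bastion_fips = [ 193.62.55.21 ]' | extract_ipv4

# In the Terraform working directory the same filter could be fed by
# the real command (not executed here):
#   terraform output bastion_fips | extract_ipv4
```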

- Run ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/ansipoor.sh in a terminal window. It configures the VMs so that they are ready for the practicals, which takes 6 to 7 minutes per VM. This script requires the OpenStack CLI to be installed locally. Kubespray does not provide enough information to modify VMs remotely.

- Access the VMs via SSH directly if they have public IPs attached. Otherwise, use an SSH tunnel via the bastion server, for example: ssh -i ~/.ssh/id_rsa -o UserKnownHostsFile=/dev/null -o ProxyCommand="ssh -W %h:%p -i ~/.ssh/id_rsa ubuntu@193.62.55.21" ubuntu@10.0.0.5

- After the Docker practical and before the Kubernetes practical, run ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/startminikube.sh. Starting Minikube this late keeps users from seeing an overwhelming number of Minikube-related Docker processes during the earlier practical. The script configures Minikube so that users do not have to do it manually.
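The SSH access through the bastion described in the steps above can be wrapped in a small function so that the long option list is typed only once. This is a sketch: ssh_via_bastion is a hypothetical name, and the key path, the ubuntu user and the options are copied from the example command rather than from any ResOps script.

```shell
# Hypothetical helper: open an SSH session (or run a command) on a VM
# without a floating IP by tunnelling through the bastion.
#   $1 = floating IP of the bastion, $2 = private IP of the target VM,
#   remaining arguments = optional command to run on the target.
ssh_via_bastion() {
    local bastion_ip="$1" target_ip="$2"
    shift 2
    ssh -i ~/.ssh/id_rsa \
        -o UserKnownHostsFile=/dev/null \
        -o ProxyCommand="ssh -W %h:%p -i ~/.ssh/id_rsa ubuntu@${bastion_ip}" \
        "ubuntu@${target_ip}" "$@"
}

# Usage with the addresses from the example (not executed here):
#   ssh_via_bastion 193.62.55.21 10.0.0.5
#   ssh_via_bastion 193.62.55.21 10.0.0.5 hostname
```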

NOTE:

- -o UserKnownHostsFile=/dev/null disables reading from and writing to ~/.ssh/known_hosts. This opens a security hole for man-in-the-middle attacks. The option -o StrictHostKeyChecking=No would not work with -o ProxyCommand as the keys need to be exchanged. It is better security practice to edit the entries in ~/.ssh/known_hosts when duplication happens, but it is certainly less convenient.

- You can run ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/terraform.destroy.sh to remove the cluster completely.

- You can also run ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/terraform.sh with different parameters in ~/IdeaProjects/tsi-ccdoc/tsi-cc/ResOps/scripts/kubespray/resops.tf to modify an existing cluster.
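For the known_hosts point in the notes above, stale entries can be removed with ssh-keygen -R instead of being edited by hand, for example after the cluster has been destroyed and recreated with the same addresses. The wrapper below is a sketch; forget_host is a hypothetical name and the default file path is the usual per-user location.

```shell
# Hypothetical helper: remove all keys recorded for a host from a
# known_hosts file (default: the per-user file).
forget_host() {
    local host="$1" file="${2:-$HOME/.ssh/known_hosts}"
    ssh-keygen -R "$host" -f "$file"
}

# Usage after rebuilding the cluster (not executed here):
#   forget_host 193.62.55.21
#   forget_host 10.0.0.5
```

With the stale entries gone, the next connection records the new host key, so -o UserKnownHostsFile=/dev/null is no longer needed for convenience.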