DevOps toolchain from GitLab to OpenStack for pipelines on ECP

The toolchain for DevOps pipelines consists of the following tools:

All ECP applications are stored in GitHub, in projects named cpa_*. The command-line interface is ecp_cli.

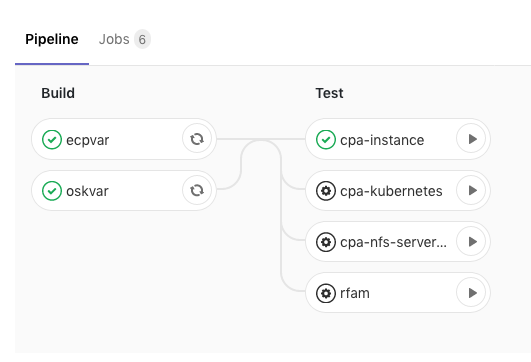

The overall workflow is shown in the diagram below:

The complex logic for interacting with ECP through its CLI, and with a cloud provider such as OSK, GCP, AWS or Azure through ECP, is coded in the CI/CD Library. To create a new pipeline that can be invoked from the CI/CD Dashboard, perform the following tasks:

Adding a test case to the Dashboard

Create a job in .gitlab-ci.yml to be managed by the Dashboard. The token value and the pipeline URL are unique to each project, for example:

```yaml
cpa-instance:
  stage: test
  script:
    - curl -X POST -F token=81ee2ce150624134ec0462845f4dda -F ref=master -F variables[os_username]="${os_username}" -F variables[os_password]="${os_password}" -F variables[ecp_user]="${ecp_user}" -F variables[ecp_password]="${ecp_password}" -F variables[app_account_name]="${app_account_name}" -F variables[config_account_name]="${config_account_name}" https://gitlab.ebi.ac.uk/api/v4/projects/731/trigger/pipeline
  when: manual
```
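The job above hard-codes the trigger token and sends six variables in one long curl command. A minimal bash sketch of the same request follows, assuming the token is supplied through a masked CI/CD variable instead of being written into .gitlab-ci.yml. The function names and the TRIGGER_TOKEN, TRIGGER_URL and DRY_RUN variables are hypothetical; only the trigger API fields (token, ref, variables[...]) come from the job above.

```bash
# Hypothetical helpers: TRIGGER_TOKEN, TRIGGER_URL and DRY_RUN are
# illustrative names, not variables defined by the CI/CD Library.

# build_trigger_args REF VAR_NAME...
# Fills the global array TRIGGER_ARGS with curl options: the trigger token,
# the ref, and one variables[NAME]=VALUE form field per named shell variable.
build_trigger_args() {
  local ref="$1"; shift
  if [[ -z "${TRIGGER_TOKEN:-}" || -z "$ref" ]]; then
    echo "build_trigger_args: TRIGGER_TOKEN and REF are required" >&2
    return 1
  fi
  # --form-string sends the value literally; with -F a value starting
  # with @ or < would be read from a file instead.
  TRIGGER_ARGS=(-X POST --form-string "token=${TRIGGER_TOKEN}" --form-string "ref=${ref}")
  local name
  for name in "$@"; do
    # ${!name} is indirect expansion: the value of the variable named $name.
    TRIGGER_ARGS+=(--form-string "variables[${name}]=${!name:-}")
  done
}

# trigger_pipeline REF VAR_NAME...
# Sends the request to TRIGGER_URL, or only prints it when DRY_RUN=1
# (the printed command includes the token, so do not log it in CI).
trigger_pipeline() {
  build_trigger_args "$@" || return 1
  if [[ "${DRY_RUN:-0}" == 1 ]]; then
    echo curl "${TRIGGER_ARGS[@]}" "${TRIGGER_URL}"
  else
    curl --fail "${TRIGGER_ARGS[@]}" "${TRIGGER_URL}"
  fi
}
```

For example, with TRIGGER_URL set to https://gitlab.ebi.ac.uk/api/v4/projects/731/trigger/pipeline, running `trigger_pipeline master os_username os_password ecp_user ecp_password app_account_name config_account_name` sends the same form fields as the cpa-instance job, and setting DRY_RUN=1 prints the command for inspection instead.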

Creating a pipeline

Each project in GitLab can have at most one pipeline definition, its .gitlab-ci.yml. A CI/CD pipeline can be triggered by external events such as a commit on GitHub, an update on Docker Hub or a cron job. It can also be triggered manually.
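Inside a job, GitLab records which kind of event started the pipeline in the predefined variable CI_PIPELINE_SOURCE. The bash sketch below is a hypothetical helper, not part of the CI/CD Library, that maps the values relevant to this section to a readable description, for example to log at the start of a test job. Declaratively, the same distinction can be made in .gitlab-ci.yml with rules such as `if: '$CI_PIPELINE_SOURCE == "trigger"'`.

```bash
# describe_pipeline_source [SOURCE]
# Hypothetical helper: maps CI_PIPELINE_SOURCE (a predefined GitLab CI/CD
# variable) to the trigger types discussed in this section. An explicit
# argument overrides the variable.
describe_pipeline_source() {
  local src="${1:-${CI_PIPELINE_SOURCE:-}}"
  case "$src" in
    push)     echo "commit pushed to the repository" ;;
    schedule) echo "pipeline schedule (cron)" ;;
    web)      echo "manual run from the GitLab UI" ;;
    trigger)  echo "trigger token used through the API" ;;
    pipeline) echo "multi-project pipeline started from another project" ;;
    external_pull_request_event) echo "external pull request on GitHub" ;;
    "")       echo "unknown: CI_PIPELINE_SOURCE is not set" >&2; return 1 ;;
    *)        echo "other: $src" ;;
  esac
}
```

A pipeline started by the Dashboard's curl call with a trigger token, or by a Docker Hub webhook using such a token, would report "trigger" here.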

- To create a new cluster on GKE with a Google account on GitLab.com, click Add Kubernetes cluster. This provides a dedicated runner in GCP; otherwise, a shared runner is used by default.

- To trigger CI/CD with a commit on GitHub, create a new project with the CI/CD for external repo option, then click Git Repo by URL.

- To trigger CI/CD manually, create a blank project and click Set up CI/CD to start editing .gitlab-ci.yml.

In the project's Settings > CI/CD, expand Pipeline triggers, add a brief description and click Add trigger. The resulting token and the RESTful API can be used in three different ways:

- A third-party utility such as cURL can trigger the pipeline by calling the RESTful API.

- A pipeline in another project can trigger the pipeline by calling the RESTful API. This is how the Dashboard above works.

- Push or tag push events in another project can trigger the pipeline through a webhook defined in that project. This is handy when changes in several projects should trigger the same pipeline, so that their integration is tested. The owners of these projects have to agree to add webhooks that notify push or tag push events. Also, these projects need to be public or protected.
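For the webhook option, GitLab accepts the trigger token as a query parameter on a ref-specific trigger URL, which can be pasted into the other project's webhook settings. The helper below is a hypothetical sketch that only assembles that URL; because the token appears in the URL itself, anyone who can read the webhook settings can also read the token.

```bash
# webhook_trigger_url BASE_URL PROJECT_ID REF TOKEN
# Hypothetical helper that builds the URL to register as a push or tag push
# webhook in another project. REF must not need URL encoding.
webhook_trigger_url() {
  local base="${1%/}" project_id="$2" ref="$3" token="$4"   # drop one trailing "/"
  if [[ -z "$base" || -z "$project_id" || -z "$ref" || -z "$token" ]]; then
    echo "usage: webhook_trigger_url BASE_URL PROJECT_ID REF TOKEN" >&2
    return 1
  fi
  printf '%s/api/v4/projects/%s/ref/%s/trigger/pipeline?token=%s\n' \
    "$base" "$project_id" "$ref" "$token"
}
```

For the project used by the Dashboard example, `webhook_trigger_url https://gitlab.ebi.ac.uk 731 master "$TOKEN"` would produce the URL to register, with TOKEN being a hypothetical variable holding the trigger token.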

Implementing ECP test cases

Include the test framework for ECP CI/CD:

```yaml
include:
  - 'https://gitlab.ebi.ac.uk/davidyuan/cicd-lib/raw/master/lib/ecp-cicd.yaml'
```

Define variables at the pipeline scope:

```yaml
variables:
  app_name: 'Generic server instance'
  ssh_key: ssh-rsa AAAAB3NzaC1yc2EAAAADAQABAAABAQDbVJdgtiojWuTmIpa6GzIVeaapJKwMb3zG7Y8iWgkhFRziLK+SfRoeg4VigFOMhpDRuMFbK/5Ui61XJ/mDGNoKFX8Zr4CZ8f+e5nzZWY/w58p5s2g2cbJcpJV249qKmlnNKQJi+qONAoIczQN4Hc7J4rqlxXwv+lH9uRZE15+O6Ughp9SZp3EY+ZhuGw1lrnXz933OygL3qQblgs2KVrSHT4TSsQrKe1dDnET55JsPlODh//xfA/WVAS5pnKIYRtaSwVzMZTzmhT70DymfXsNWX72d+N8BDlt4wOLE9EgUXog7z8akdNoXwkcF5qYDRhsjEc5KIgScBN8l6O/9uB4v
```

Note that app_name and ssh_key are required by ECP. In addition, an id_rsa file is needed at the root of the project.
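Since a missing app_name, ssh_key or id_rsa may only surface once ECP rejects the request, a job could check them first. The following pre-flight check is a hypothetical sketch, not something the CI/CD Library is known to provide; the accepted key prefixes are an assumption covering common OpenSSH public key types.

```bash
# check_ecp_inputs [PROJECT_DIR]
# Hypothetical pre-flight check: fails when app_name or ssh_key is empty,
# when ssh_key does not look like an OpenSSH public key, or when
# PROJECT_DIR/id_rsa is missing. All problems are reported, not just the first.
check_ecp_inputs() {
  local dir="${1:-.}" status=0 name
  for name in app_name ssh_key; do
    if [[ -z "${!name:-}" ]]; then
      echo "missing variable: $name" >&2
      status=1
    fi
  done
  if [[ -n "${ssh_key:-}" ]]; then
    case "$ssh_key" in
      ssh-rsa\ *|ssh-ed25519\ *|ecdsa-sha2-*) ;;
      *) echo "ssh_key does not look like an OpenSSH public key" >&2; status=1 ;;
    esac
  fi
  # If needed, the public key can be regenerated from the private one with:
  #   ssh-keygen -y -f id_rsa
  if [[ ! -f "$dir/id_rsa" ]]; then
    echo "missing file: $dir/id_rsa" >&2
    status=1
  fi
  return "$status"
}
```

A job could call it at the top of its script as `check_ecp_inputs "$CI_PROJECT_DIR"`, where CI_PROJECT_DIR is GitLab's predefined path to the checked-out project.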

Invoke the logic to create the profile:

```yaml
profile:
  extends: .abstract_profile
  variables:
    floating_ip_pool: ext-net-37
    machine_type: s1.small
    network_name: EBI-TSI-davidyuan_data_private
    disk_image_name: centos7
    cloud_provider: OSTACK
    os_project_name: EBI-TSI-davidyuan
    os_project_id: 7c1d8ce04aed460b88d87d4eb20d51fd
    os_auth_url: 'https://extcloud06.ebi.ac.uk:13000/v3'
    os_identity_api_version: 3
    os_region_name: regionOne
    os_user_domain_name: Default
```

The default config_name, param_name and cred_name are intentionally hard-coded in the library to prevent the proliferation of ECP profiles.
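The os_* variables in the profile job have the same names, in lower case, as the standard OS_* environment variables read by the openstack command-line client. Assuming they are meant to carry the same values, the sketch below prints an openrc-style file so the same OpenStack project can be inspected locally. profile_to_openrc is a hypothetical helper, and the password is left out on purpose.

```bash
# profile_to_openrc
# Hypothetical helper: prints export lines that map the lowercase profile
# variables to the OS_* environment variables read by the openstack CLI.
# Unset variables are skipped. Assumes values contain no single quotes.
# OS_PASSWORD is deliberately not written out; export it separately.
profile_to_openrc() {
  local name
  for name in os_auth_url os_project_name os_project_id os_identity_api_version \
              os_region_name os_user_domain_name os_username; do
    if [[ -n "${!name:-}" ]]; then
      # ${name^^} (bash 4+) upper-cases the name, e.g. os_auth_url -> OS_AUTH_URL
      printf "export %s='%s'\n" "${name^^}" "${!name}"
    fi
  done
}
```

For example, `profile_to_openrc > openrc.sh`, then `source openrc.sh`, exporting OS_PASSWORD and running `openstack server list`, shows whether the instances created by a pipeline are visible in the project.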

Invoke the logic to deploy CPA:

```yaml
deployment:
  extends: .abstract_deployment
  variables:
    APP: >
      {
        "repoUri": "https://github.com/EMBL-EBI-TSI/cpa-instance"
      }
    DPMT: >
      {
        "applicationName": "${app_name}",
        "applicationAccountUsername": "${app_account_name}",
        "configurationAccountUsername": "${config_account_name}",
        "attachedVolumes": [],
        "assignedInputs": [],
        "assignedParameters": [],
        "configurationName": "${config_name}"
      }
  #when: manual
```

Provide the repoUri (APP) and the deployment descriptor (DPMT) as JSON input to the deployment job defined in the library.
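The ${...} placeholders in DPMT are expanded by GitLab when the job runs, so a wrong or empty variable only shows up in the deployment. The sketch below renders an equivalent single-line descriptor locally for inspection. render_dpmt is hypothetical, and because config_name is hard-coded in the library, any value supplied for it here is only a placeholder.

```bash
# render_dpmt
# Hypothetical helper: prints the deployment descriptor as single-line JSON,
# filled with the current values of app_name, app_account_name,
# config_account_name and config_name. Values containing double quotes or
# backslashes are rejected, because this sketch does no JSON escaping.
render_dpmt() {
  local name
  for name in app_name app_account_name config_account_name config_name; do
    case "${!name:-}" in
      "") echo "render_dpmt: $name is empty" >&2; return 1 ;;
      *'"'*|*'\'*) echo "render_dpmt: $name needs JSON escaping" >&2; return 1 ;;
    esac
  done
  printf '{"applicationName": "%s", "applicationAccountUsername": "%s", "configurationAccountUsername": "%s", "attachedVolumes": [], "assignedInputs": [], "assignedParameters": [], "configurationName": "%s"}\n' \
    "$app_name" "$app_account_name" "$config_account_name" "$config_name"
}
```

Piping its output into `python3 -m json.tool` is one way to confirm that the result is valid JSON.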

Create and invoke the logic to test the workload:

```yaml
workload:
  extends: .abstract_workload
  script:
    - deployment_id=$(cat ${ARTIFACT_DIR}/deployment.json)
    - deployment_id=$(ecputil getDeploymentId "${deployment_id}")
    # accessIp may be null in deployment.json if the instance was not deployed yet
    - data=$(ecp get deployment ${deployment_id} -j)
    - ip=$(ecputil getAccessIp "${data}")
    # Oversimplified test case: run "df -h" on the new VM
    - ssh -o StrictHostKeyChecking=No -i ${ID_RSA_FILE} centos@${ip} "df -h" > ${ARTIFACT_DIR}/workload.log
    - ecp 'login' '-r'
  when: delayed
  start_in: 1 minutes
  retry:
    max: 2
    when:
      - script_failure
  #when: manual
  dependencies:
    - deployment
```

The logic to test a workload is highly specific to the application; the job in the library is just a skeleton.
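As written, the workload job passes whenever ssh and df succeed, whatever df reports. One way to turn the df smoke test into an actual assertion is sketched below: a hypothetical check_disk_usage helper that reads POSIX `df -P` output and fails when any filesystem reaches a threshold. The 90% default is an arbitrary assumption.

```bash
# check_disk_usage [THRESHOLD]
# Hypothetical helper: reads `df -P` output on stdin and fails if any
# filesystem's capacity is at or above THRESHOLD percent (default 90).
check_disk_usage() {
  local threshold="${1:-90}" status=0 header=1
  local fs blocks used avail capacity mount
  while read -r fs blocks used avail capacity mount; do
    if (( header )); then header=0; continue; fi   # skip the column titles
    capacity="${capacity%\%}"                        # "96%" -> "96"
    [[ "$capacity" =~ ^[0-9]+$ ]] || continue       # ignore malformed lines
    if (( capacity >= threshold )); then
      echo "FAIL: $mount is ${capacity}% full (threshold ${threshold}%)" >&2
      status=1
    fi
  done
  return "$status"
}
```

In the job, the ssh step could become `ssh ... centos@${ip} "df -P" | tee ${ARTIFACT_DIR}/workload.log | check_disk_usage 90`, preceded by `set -o pipefail` so that an ssh failure is not hidden by the pipeline; the function itself would have to be defined or sourced in the job's script.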

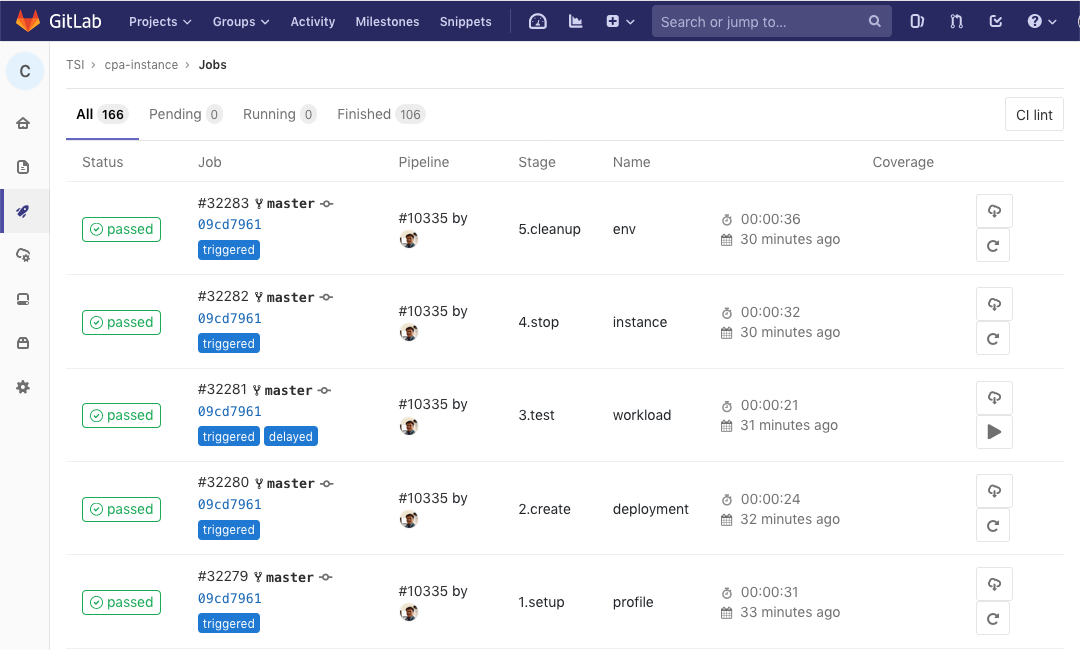

Once the .gitlab-ci.yml files are created or updated, use the Dashboard to run the test cases.

Downloading and viewing logs

Logs and intermediate results are exposed on GitLab if the files are stored under ARTIFACT_DIR, and they can be downloaded for further analysis. This also means that sensitive data should never be stored as artifacts without encryption.
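GitLab masked variables hide secret values in the job log, but not in files a job writes to ARTIFACT_DIR. Where encrypting an artifact is not practical, one alternative is to redact known secret values before saving the file. The sketch below performs redaction, which is a different technique from encryption: the secrets are removed from the copy rather than protected. redact_to_artifact is a hypothetical helper.

```bash
# redact_to_artifact FILE SECRET_VAR...
# Hypothetical helper: copies FILE into ARTIFACT_DIR with every occurrence
# of the named variables' values replaced by [MASKED].
redact_to_artifact() {
  local src="$1"; shift
  if [[ -z "${ARTIFACT_DIR:-}" || ! -f "$src" ]]; then
    echo "usage: ARTIFACT_DIR=DIR redact_to_artifact FILE SECRET_VAR..." >&2
    return 1
  fi
  local content name value
  content="$(<"$src")"
  for name in "$@"; do
    value="${!name:-}"
    [[ -n "$value" ]] || continue
    # The inner quotes make the value a literal string, not a glob pattern.
    content="${content//"$value"/[MASKED]}"
  done
  mkdir -p "$ARTIFACT_DIR"
  printf '%s\n' "$content" > "$ARTIFACT_DIR/$(basename "$src")"
}
```

For example, `redact_to_artifact workload.log ecp_password os_password` writes ${ARTIFACT_DIR}/workload.log with both passwords replaced.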

Each job and pipeline has a run/rerun button and a download button beside it, as shown below.
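Artifacts can also be fetched without the UI through the GitLab jobs API, which serves the artifacts archive of the latest successful pipeline for a given ref and job name. The sketch assumes a personal access token in a hypothetical GITLAB_TOKEN variable; the helper names are likewise illustrative.

```bash
# artifacts_url BASE_URL PROJECT_ID REF JOB_NAME
# Builds the GitLab API URL for the artifacts archive of JOB_NAME in the
# latest successful pipeline on REF. JOB_NAME must not need URL encoding.
artifacts_url() {
  local base="${1%/}" project_id="$2" ref="$3" job="$4"
  if [[ -z "$base" || -z "$project_id" || -z "$ref" || -z "$job" ]]; then
    echo "usage: artifacts_url BASE_URL PROJECT_ID REF JOB_NAME" >&2
    return 1
  fi
  printf '%s/api/v4/projects/%s/jobs/artifacts/%s/download?job=%s\n' \
    "$base" "$project_id" "$ref" "$job"
}

# download_artifacts BASE_URL PROJECT_ID REF JOB_NAME
# Saves the archive as artifacts.zip. GITLAB_TOKEN is a hypothetical
# variable holding a personal access token, sent in the PRIVATE-TOKEN header.
download_artifacts() {
  local url
  url="$(artifacts_url "$@")" || return 1
  curl --fail --location --header "PRIVATE-TOKEN: ${GITLAB_TOKEN}" \
       --output artifacts.zip "$url"
}
```

For example, `download_artifacts https://gitlab.ebi.ac.uk 731 master workload` would save the workload job's artifacts from project 731 as artifacts.zip, ready to unpack with unzip.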